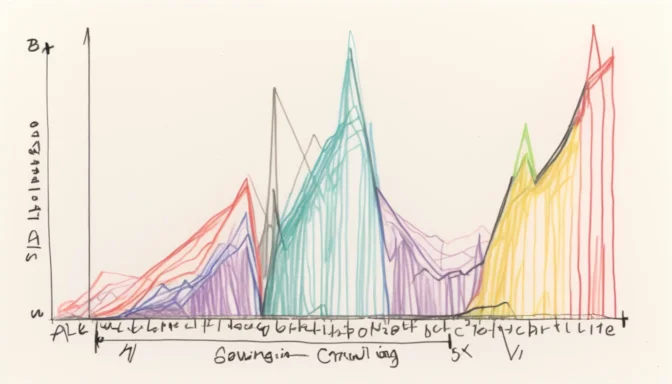

What is Crawling in a Search Engine?

In SEO, crawling is the systematic process by which search engine bots, commonly known as web crawlers or spiders, discover and fetch a website's content. This content ranges from text and images to videos, and crawlers generally reach it by following links from pages they already know.
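To make the idea of link-based discovery concrete, here is a minimal sketch in Python of the step where a crawler reads one page and collects the links it could visit next. The `LinkExtractor` class, the `discover_links` helper, and the sample HTML are illustrative assumptions, not how any particular search engine implements crawling.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects absolute URLs from <a href> tags on a single page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links against the page's own URL.
                self.links.append(urljoin(self.base_url, value))


def discover_links(base_url, html):
    """Hypothetical helper: return every link found in the given HTML."""
    parser = LinkExtractor(base_url)
    parser.feed(html)
    return parser.links


# Sample page content (made up for illustration; no network access needed).
SAMPLE_HTML = (
    '<p><a href="/products">Products</a> '
    '<a href="https://example.org/blog">Blog</a></p>'
)

links = discover_links("https://example.com/", SAMPLE_HTML)
print(links)
```

A real crawler would repeat this step for each discovered URL, which is why pages that no other page links to are hard for search engines to find.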

How to Stop Search Engines from Crawling

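You can prevent sections of your website from being crawled by search engines using a robots.txt file. This text file, placed at the root of the site, tells web crawlers which paths to skip. Note that it controls crawling rather than indexing: a blocked page can still appear in the index if other sites link to it, so pages that must stay out of search results need a noindex directive instead.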
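As a rough illustration, the sketch below embeds a small robots.txt file as a string and uses Python's standard `urllib.robotparser` module to check which URLs a compliant crawler would be allowed to fetch. The paths `/admin/` and `/cart/` and the `example.com` domain are assumptions chosen for the example.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt content (hypothetical paths). On a real site this
# would be served as a plain text file at https://example.com/robots.txt.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_allowed(url, user_agent="*"):
    """Return True if the rules above permit this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


print(is_allowed("https://example.com/products"))        # allowed
print(is_allowed("https://example.com/admin/settings"))  # blocked
```

Keep in mind that robots.txt is advisory: well-behaved crawlers follow it, but it is not an access control mechanism.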

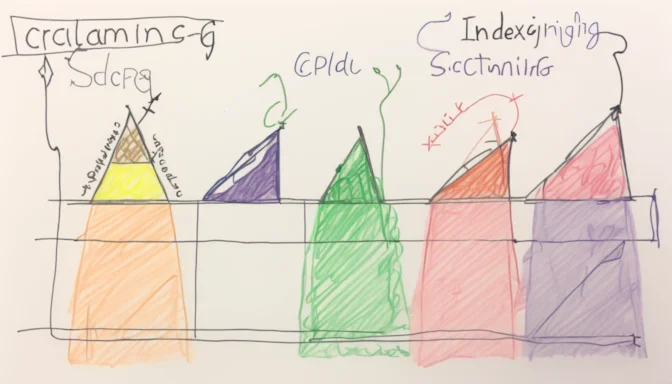

Crawling vs Indexing in Search Engines

Crawling and indexing are two distinct yet interconnected facets of SEO. Crawling involves search engine bots discovering publicly available web pages, whereas indexing is the process of storing and sorting this content for display in search results.
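The difference can be sketched with a toy example in Python: the dictionary of crawled pages stands in for the output of crawling, and `build_index` stands in for indexing by mapping each word to the pages that contain it. Both the data and the function are simplified assumptions; real search indexes are far more elaborate.

```python
from collections import defaultdict


def build_index(pages):
    """Toy indexing step: map each word to the set of URLs containing it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in text.lower().split():
            index[word].add(url)
    return index


# Pretend output of the crawling step: URL -> page text (made-up data).
crawled_pages = {
    "https://example.com/": "fresh coffee beans",
    "https://example.com/tea": "fresh green tea",
}

index = build_index(crawled_pages)

# A lookup against the index, as a search result page would need.
print(sorted(index["fresh"]))
print(sorted(index["tea"]))
```

A page that was never crawled cannot end up in `crawled_pages`, and so can never be returned by a lookup, which is the practical link between the two stages.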

Google's Approach to Crawling

Within the context of Google, crawling involves Googlebot finding new or updated web pages. Crawling is often confused with indexing, but the two are separate processes. Google uses algorithms to decide which sites to crawl, how often, and how many pages to fetch from each site.

Blocking Google Crawler

To restrict Google's crawler from accessing specific parts of your site, you can use a robots.txt file or other techniques to manage crawl traffic. For media files such as images or videos, blocking the crawler also keeps them out of Google's search results; an ordinary web page that is blocked can still be listed if other pages link to it.
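A robots.txt file can also target a single crawler by name. The sketch below, again using Python's standard `urllib.robotparser`, blocks only the `Googlebot` user agent from a hypothetical `/private/` directory while leaving other crawlers unrestricted. The directory, the domain, and the `OtherBot` name are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser

# Rules for Googlebot only, followed by a permissive default group.
# The /private/ path is a made-up example.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /private/

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

url = "https://example.com/private/report.html"

# Googlebot matches the first group and is blocked from /private/.
googlebot_allowed = parser.can_fetch("Googlebot", url)

# "OtherBot" is a hypothetical crawler name; it falls through to the default group.
otherbot_allowed = parser.can_fetch("OtherBot", url)

print(googlebot_allowed, otherbot_allowed)
```

Checking rules this way before deploying them can help catch a group that blocks more, or less, than intended.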

Frequency of Search Engine Crawling

The rate at which search engines crawl a website can vary greatly. Some sites are revisited within days, while others may wait weeks between crawls. This frequency depends on factors such as the website's authority and how often its content is updated.

Which Search Engines are Effective at Crawling?

Googlebot is usually cited as an efficient web crawler, adept at quickly and accurately fetching web pages for indexing. However, it may not cover every page on large or complex websites, because the number of URLs it will fetch from a site in a given period, known as the crawl budget, is limited. As a result, understanding crawl budget and how to optimize for it is essential for owners of such sites.
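The effect of a limited crawl budget can be sketched with a toy breadth-first crawl in Python over an in-memory link graph. The `crawl_with_budget` function, the `SITE` structure, and the budget of three pages are all assumptions for illustration; how Google actually allocates crawl budget is decided by its own algorithms.

```python
from collections import deque


def crawl_with_budget(link_graph, start, budget):
    """Breadth-first crawl that stops after fetching `budget` pages."""
    seen = {start}
    queue = deque([start])
    fetched = []
    while queue and len(fetched) < budget:
        url = queue.popleft()
        fetched.append(url)  # stands in for downloading the page
        for target in link_graph.get(url, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return fetched


# Made-up site structure: page -> pages it links to.
SITE = {
    "/": ["/shop", "/blog"],
    "/shop": ["/shop/item-1", "/shop/item-2"],
    "/blog": ["/blog/post-1"],
}

fetched = crawl_with_budget(SITE, "/", budget=3)
print(fetched)
```

With a budget of three, only the homepage and the two section pages are fetched, and the deeper item and post pages are left out. This is one reason why keeping important pages close to the homepage in the link structure tends to help them get crawled.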

E-Commerceo
