Best Practices for Robots.txt

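Check your robots.txt file periodically to make sure it still does what you intend. The file must be named robots.txt and placed in your website's root directory (for example, https://www.example.com/robots.txt), because crawlers do not look for it anywhere else. Paths in its rules are case-sensitive, so match the capitalization of your directory and subdirectory names exactly.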
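As a quick illustration of both points, the sketch below uses Python's standard-library `urllib.robotparser`. It assumes a hypothetical site at `www.example.com` with a single `Disallow: /private/` rule, and `robots_url_for` is an illustrative helper, not part of any library. `urllib.robotparser` uses simple prefix matching, which is less complete than Google's parser, but it is enough to show that `/private/` and `/Private/` are treated as different paths.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.robotparser import RobotFileParser


def robots_url_for(page_url: str) -> str:
    """Return the root-level robots.txt URL for the host serving page_url (hypothetical helper)."""
    parts = urlsplit(page_url)
    # Crawlers only request /robots.txt at the root of the scheme + host.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


# Hypothetical rule set for www.example.com.
rules = """User-agent: *
Disallow: /private/
"""
parser = RobotFileParser()
parser.parse(rules.splitlines())

print(robots_url_for("https://www.example.com/shop/item?id=7"))
# https://www.example.com/robots.txt

# Path matching is case-sensitive: only the lowercase directory is blocked.
print(parser.can_fetch("*", "https://www.example.com/private/report.html"))  # False
print(parser.can_fetch("*", "https://www.example.com/Private/report.html"))  # True
```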

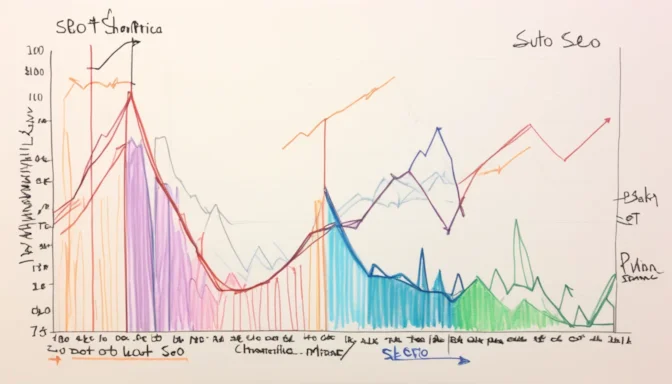

Is Robots.txt Good for SEO?

Disallow rules in a robots.txt file can be a powerful tool when used correctly. For many sites, keeping search engine crawlers away from specific URL patterns is an important part of SEO. However, a poorly written rule can block pages you want found and harm your site's performance in search.
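Disallow values are matched as path prefixes, which is a common source of accidental blocking. The following sketch uses hypothetical rules for `www.example.com` and checks them with Python's `urllib.robotparser`. It shows that `Disallow: /search` blocks internal search results but also catches an unrelated page such as `/search-tips`.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: block the cart and internal search results.
rules = """User-agent: *
Disallow: /cart/
Disallow: /search
"""
parser = RobotFileParser()
parser.parse(rules.splitlines())

paths = ["/cart/checkout", "/search?q=shoes", "/search-tips", "/products/shoes"]
# True means the path may be crawled; each rule is a prefix match.
results = {p: parser.can_fetch("*", "https://www.example.com" + p) for p in paths}
for path, allowed in results.items():
    print(f"{path:18} {'allowed' if allowed else 'blocked'}")
```

The `/search-tips` result is the pitfall: the rule was meant for search results, but any path starting with `/search` is blocked.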

Role of Robots.txt in SEO

A robots.txt file tells search engine crawlers which URLs on your site they may access. It is mainly used to avoid overloading your server with requests; it is not a method for keeping pages out of search engine indexes. For that, use a 'noindex' rule or password protection.
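A crawler that is blocked by robots.txt never fetches the page, so it also never sees a 'noindex' rule on that page, and Google notes that a blocked URL can still be indexed if other pages link to it. The hypothetical helper below flags that conflict: given robots.txt text and a list of URLs you intend to mark 'noindex', it returns the ones the crawler may not fetch. It is a minimal sketch built on `urllib.robotparser`, whose matching is simpler than Googlebot's; the URLs are placeholders.

```python
from urllib.robotparser import RobotFileParser


def noindex_conflicts(robots_text: str, noindex_urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the noindex URLs that robots.txt stops `agent` from crawling.

    A crawler that may not fetch a page cannot read its noindex rule.
    """
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return [url for url in noindex_urls if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt and pages that carry a noindex rule.
robots_text = """User-agent: *
Disallow: /drafts/
"""
pages = [
    "https://www.example.com/drafts/launch.html",
    "https://www.example.com/thank-you.html",
]
print(noindex_conflicts(robots_text, pages))
# ['https://www.example.com/drafts/launch.html']
```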

What to Block in Robots.txt

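Use the robots.txt file to keep crawlers away from expired offers and other low-value or non-public pages. This can assist your SEO efforts by focusing crawlers on more valuable content. Keep in mind that this prevents crawling, not indexing, and provides no access control: private content such as personal photos needs password protection rather than a Disallow rule.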
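If you keep the list of sections to block in code, you might generate the file rather than edit it by hand. `build_robots_txt` below is a hypothetical helper that renders one `User-agent` group, and the paths `/offers/expired/` and `/internal/` are placeholders for your own sections. The result is checked with `urllib.robotparser`.

```python
from urllib.robotparser import RobotFileParser


def build_robots_txt(disallowed_paths: list[str], user_agent: str = "*") -> str:
    """Render a minimal robots.txt group from a list of path prefixes (hypothetical helper)."""
    lines = [f"User-agent: {user_agent}"]
    lines += [f"Disallow: {path}" for path in disallowed_paths]
    return "\n".join(lines) + "\n"


# Placeholder paths for low-value or non-public sections.
content = build_robots_txt(["/offers/expired/", "/internal/"])
print(content)

parser = RobotFileParser()
parser.parse(content.splitlines())
print(parser.can_fetch("*", "https://www.example.com/offers/expired/spring-sale"))  # False
print(parser.can_fetch("*", "https://www.example.com/offers/current/"))  # True
```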

Size Limitations of Robots.txt

Google imposes a size limit of 500 KiB on robots.txt files. Any content beyond this size is ignored, so it's crucial to keep your file within this limit.
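A pre-deployment check against the 500 KiB limit could look like the sketch below. It assumes the file is served as UTF-8 and counts the limit as 500 * 1024 = 512,000 bytes; `check_robots_size` is a hypothetical helper name.

```python
GOOGLE_LIMIT_BYTES = 500 * 1024  # 500 KiB = 512,000 bytes


def check_robots_size(content: str) -> dict:
    """Report the encoded size and how many bytes past the limit would be ignored."""
    data = content.encode("utf-8")
    return {
        "size_bytes": len(data),
        "within_limit": len(data) <= GOOGLE_LIMIT_BYTES,
        "ignored_bytes": max(0, len(data) - GOOGLE_LIMIT_BYTES),
    }


small = "User-agent: *\nDisallow: /tmp/\n"
bulky = "Disallow: /x/\n" * 40_000  # an oversized, machine-generated file
print(check_robots_size(small))
print(check_robots_size(bulky))
```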

Is Robots.txt a Security Risk?

The robots.txt file itself is not a security vulnerability, but anyone can read it, so it can reveal restricted areas of a website. Avoid listing sensitive paths, and protect private areas with authentication rather than relying on robots.txt to hide them.
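Because anyone can request `/robots.txt`, every Disallow path in it is public. The sketch below reads those paths the way a curious visitor might and flags ones that look sensitive. The keyword list in `SENSITIVE_HINTS` is an assumption to adapt, not an established standard, and the parser handles only simple `Disallow:` lines.

```python
SENSITIVE_HINTS = ("admin", "backup", "secret", "staging")  # hypothetical keyword list


def disclosed_paths(robots_text: str) -> list[str]:
    """List every non-empty Disallow path; all of them are publicly readable."""
    paths = []
    for raw in robots_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        field, sep, value = line.partition(":")
        if sep and field.strip().lower() == "disallow" and value.strip():
            paths.append(value.strip())
    return paths


def risky_paths(robots_text: str) -> list[str]:
    """Return disclosed paths that contain one of the hint keywords."""
    return [p for p in disclosed_paths(robots_text)
            if any(hint in p.lower() for hint in SENSITIVE_HINTS)]


example = """User-agent: *
Disallow: /cart/
Disallow: /admin-panel/   # tells everyone where the admin area lives
Disallow: /backup-2023/
"""
print(risky_paths(example))  # ['/admin-panel/', '/backup-2023/']
```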

Impact on Google Analytics

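Using robots.txt to block bots can make your Google Analytics data cleaner, because well-behaved bots that honor your rules stay away. However, robots.txt is only advisory: malicious bots ignore it, so it will not remove all bot traffic from your reports.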
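To keep one specific crawler out, you can address it by name in its own `User-agent` group. In this sketch `ExampleScraper` is a hypothetical bot name, and the check uses `urllib.robotparser`, which matches the name case-insensitively and ignores a `/version` suffix in the requesting user agent. Only bots that choose to read and obey the file are affected.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: shut out one named bot, leave everyone else unrestricted.
rules = """User-agent: ExampleScraper
Disallow: /
"""
parser = RobotFileParser()
parser.parse(rules.splitlines())

url = "https://www.example.com/blog/post"
print(parser.can_fetch("ExampleScraper/2.1", url))  # False: its group disallows "/"
print(parser.can_fetch("Googlebot", url))           # True: no group applies to it
```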

Is Bot Traffic Bad for SEO?

Not all bot traffic is detrimental to SEO. Search engine crawlers such as Googlebot are essential for your site to appear in search results. It's crucial to distinguish these beneficial bots from harmful ones, such as scrapers and spam bots.
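Because the User-Agent header is trivial to fake, a request that claims to be Googlebot proves nothing on its own. Google documents a DNS-based check: a reverse lookup on the requesting IP, a check that the hostname belongs to a Google crawler domain, then a forward lookup confirming that the hostname resolves back to the same IP. The sketch below follows that idea with Python's `socket` module. It assumes the domains `googlebot.com` and `google.com` (consult Google's current documentation for the full list) and handles IPv4 only; the function names are illustrative.

```python
import socket

# Assumed crawler domains; check Google's documentation for the current list.
GOOGLE_CRAWLER_DOMAINS = (".googlebot.com", ".google.com")


def is_google_crawler_host(hostname: str) -> bool:
    """True if the hostname is a subdomain of one of the assumed Google crawler domains."""
    host = hostname.rstrip(".").lower()
    return host.endswith(GOOGLE_CRAWLER_DOMAINS)


def verify_googlebot(ip: str) -> bool:
    """Reverse DNS, check the domain, then forward DNS back to the same IPv4 address."""
    try:
        hostname, _, _ = socket.gethostbyaddr(ip)
        if not is_google_crawler_host(hostname):
            return False
        _, _, forward_ips = socket.gethostbyname_ex(hostname)
    except OSError:  # socket.herror and socket.gaierror are OSError subclasses
        return False
    return ip in forward_ips
```

`verify_googlebot` performs live DNS lookups, so in practice you would cache its results rather than call it on every request.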

Advantages of Using Robots.txt

A well-configured robots.txt file can keep crawlers from wasting requests on duplicate or low-value URLs and guide them toward the pages you want found. This leads to more efficient crawling and, ultimately, can improve SEO performance.
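Duplicate URLs often come from query parameters such as sort orders. Google's robots.txt matching supports `*` (any sequence of characters) and a trailing `$` (end of URL), and when several rules match, the one with the longest path wins, with the less restrictive `allow` winning a tie. Python's `urllib.robotparser` does not implement these wildcards, so the sketch below is a simplified, self-written matcher for illustration only; it skips details such as percent-encoding normalization. The rules, including `/*?sort=`, are hypothetical.

```python
import re


def _rule_regex(path: str) -> re.Pattern:
    """Translate a robots.txt path with '*' and a trailing '$' into a regex."""
    anchored = path.endswith("$")
    if anchored:
        path = path[:-1]
    # Escape literal characters; each '*' becomes '.*' (any sequence).
    body = ".*".join(re.escape(part) for part in path.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_allowed(rules: list[tuple[str, str]], url_path: str) -> bool:
    """rules: (directive, path) pairs, directive being 'allow' or 'disallow'.

    The matching rule with the longest path wins; 'allow' wins a tie.
    A path that matches no rule is allowed.
    """
    best_len, allowed = -1, True
    for directive, path in rules:
        if not path:
            continue  # an empty value matches nothing
        if _rule_regex(path).match(url_path):  # match() anchors at the start
            if len(path) > best_len or (len(path) == best_len and directive == "allow"):
                best_len, allowed = len(path), directive == "allow"
    return allowed


# Hypothetical rules: keep sorted duplicates of listing pages and PDFs away from crawlers.
rules = [("allow", "/"), ("disallow", "/*?sort="), ("disallow", "/*.pdf$")]
for path in ["/shoes", "/shoes?sort=price", "/guide.pdf", "/guide.pdf?download=1"]:
    print(path, "allowed" if is_allowed(rules, path) else "blocked")
```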

E-Commerceo